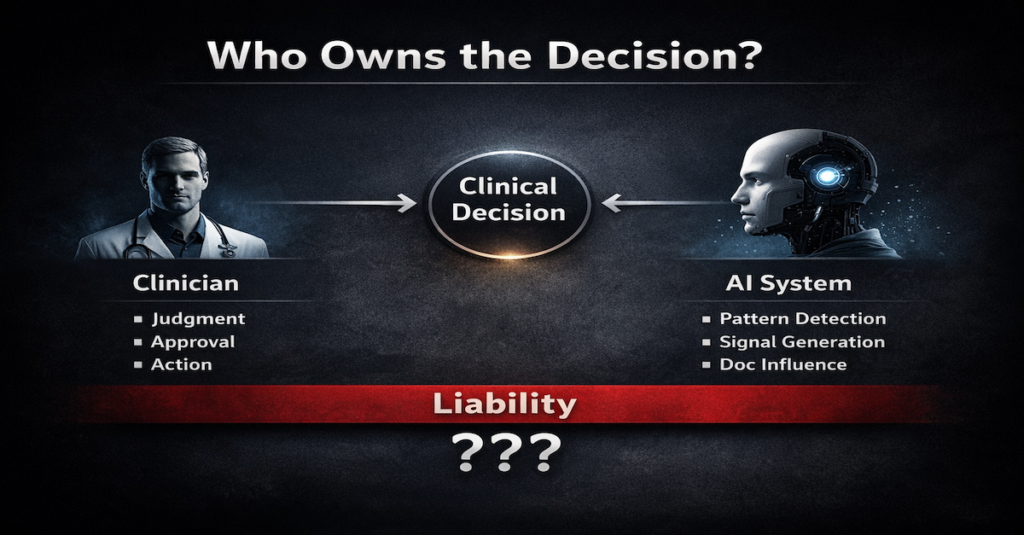

If AI Sets the Standard… Who Owns the Decision?

Yesterday I introduced the idea of an AI-Adjusted Standard of Care.

If that’s true, there’s a second question that follows:

If AI raises the standard…

who is actually responsible when something goes wrong?

Because the reality is:

AI does not make decisions on its own.

It:

- surfaces information

- structures documentation

- influences how clinicians think

But the clinician is still the one:

- reviewing

- approving

- acting

So where does responsibility sit?

If a clinician ignores an AI-generated signal,

is that negligence?

If they follow it and it turns out to be wrong,

is that still their decision?

We’re entering a space where:

Decision-making is shared

But accountability is not

And that creates tension.

Because the legal system still assumes:

A clearly identifiable decision-maker.

That assumption may no longer hold.

If AI helps define the standard of care…

it inevitably reshapes responsibility as well.